Zscaler Deception

Deception is used to deploy lures of various types (fake AD servers, fake DB servers, fake password files, fake browser cookies, etc.) throughout a customer network. If an attacker breaches the network, these lures appear to the attacker as the lowest-hanging fruit.

As attackers engage with the lures, each interaction generates a high-fidelity alert that can be sent to the SIEM. This package adds high-value security data to LogScale that can be correlated with FLTR data.

The parser normalizes data to a common schema based on CrowdStrike Parsing Standard (CPS) 1.0. This schema lets you search the data knowing only the common schema, without needing to know the vendor-specific format. It also makes it easier to combine the data with other data sources that conform to the same schema.

Breaking Changes

This update includes parser changes, which means that data ingested after upgrade will not be backwards compatible with logs ingested with the previous version.

Updating to version 1.0.0 or newer will therefore cause issues with existing queries, for example in dashboards or alerts, that were created prior to this version.

See CrowdStrike Parsing Standard (CPS) 1.0 for more details on the new parser schema.

Follow the CPS Migration to update your queries to use the fields and tags that are available in data parsed with version 1.0.0.

Installing the Package in LogScale

Find the repository where you want to send the Zscaler Deception events, or create a new one (see Creating a Repository or View).

Navigate to your repository in the LogScale interface, click Settings and then on the left.

Click and install the LogScale package for Zscaler Deception (i.e. zscaler/deception).

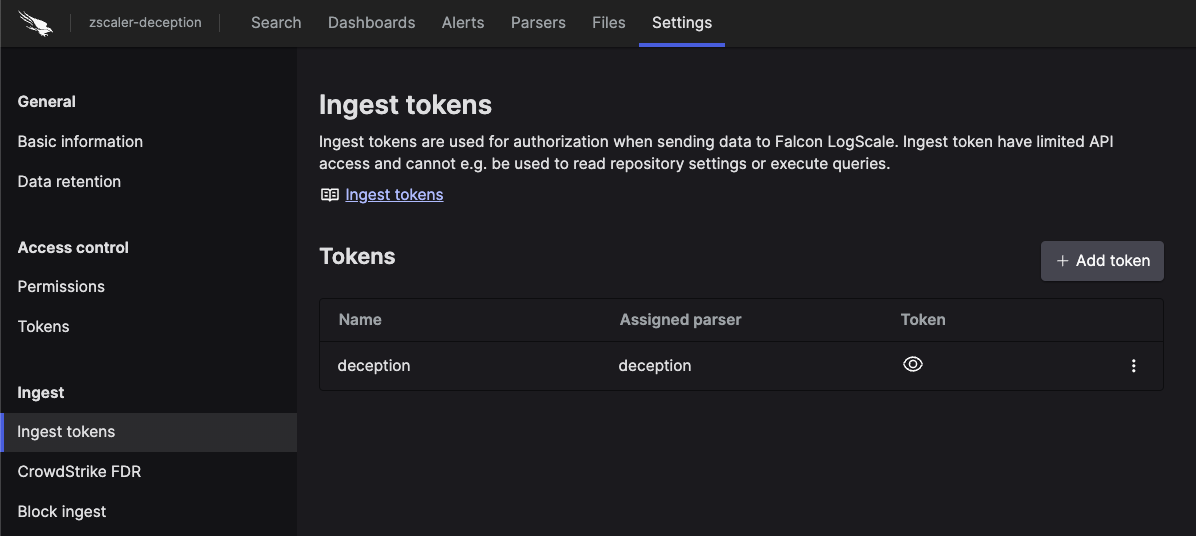

When the package has finished installing, click on the left still under the .

In the right panel, click to create a new token for each parser. Give the token an appropriate name (e.g. the name of the log it will collect logs from), and assign the parser deception to the token.

Before leaving this page, view the ingest token and copy it to your clipboard so that you can save it temporarily elsewhere.

Now that you have a repository set up in LogScale along with an ingest token, you're ready to send logs to LogScale.

Configurations and Sending the Logs to LogScale

Install the package in the LogScale repository, create an ingest token, and assign the parser to it, as described above.

In the Zscaler Deception console, add a new Syslog integration and configure it with your LogScale Collector's IP address and TCP port.

Configure the LogScale Collector as a syslog receiver, as described in the Syslog Receiver documentation, to forward logs to your LogScale repository.

Note that events might be truncated if they exceed the default limit of 2048 bytes, so the limit should be increased to 1 MB (maxEventSize: 1048576).
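To gauge whether truncation is a risk for your events, the size comparison can be sketched in Python. The two constants mirror the values in the note above. The function name and the sample event are hypothetical and exist only for illustration, and the sketch assumes the limit applies to the UTF-8 encoded size of the whole event.

```python
# Limits taken from the note above; all names here are illustrative assumptions.
DEFAULT_MAX_EVENT_SIZE = 2048     # bytes, the default limit
RAISED_MAX_EVENT_SIZE = 1048576   # bytes, 1 MB (maxEventSize: 1048576)


def would_truncate(event: str, max_event_size: int) -> bool:
    """Return True if the UTF-8 encoded event is larger than the limit."""
    return len(event.encode("utf-8")) > max_event_size


# Hypothetical sample: a syslog-style line with roughly 5 KB of payload.
sample_event = "<134>1 2024-01-01T00:00:00Z decoy app - - - " + "x" * 5000

print(would_truncate(sample_event, DEFAULT_MAX_EVENT_SIZE))  # True
print(would_truncate(sample_event, RAISED_MAX_EVENT_SIZE))   # False
```

Running a check like this against a few representative alerts shows whether the default limit would cut them short before they reach the parser.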

Verify Data is Arriving in LogScale

Once you have completed the above steps the Zscaler Deception data should be arriving in your LogScale repository.

You can verify this by running a simple search to see the events:

#Vendor = "zscaler" | #event.module = "deception"

Package Contents Explained

This package parses incoming data and normalizes the data as part of that parsing. The parser normalizes the data to the CrowdStrike Parsing Standard (CPS) 1.0 schema, which is based on OpenTelemetry standards, while still preserving the original data.

If you want to search using the original field names and values, you can access those in the fields whose names are prefixed with the word Vendor. Fields which are not prefixed with Vendor are standard fields which are either based on the schema (e.g. source.ip) or on LogScale conventions (e.g. @rawstring).
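The split between original and normalized fields can be pictured with a short Python sketch. It assumes the prefix is written as `Vendor.` followed by the original field name. The event below and its `Vendor.attacker_ip` field are invented for illustration and are not taken from the parser.

```python
def split_fields(event: dict) -> tuple[dict, dict]:
    """Split a parsed event into (vendor_fields, normalized_fields)."""
    # Assumption: original vendor fields carry the "Vendor." prefix.
    vendor = {k: v for k, v in event.items() if k.startswith("Vendor.")}
    normalized = {k: v for k, v in event.items() if not k.startswith("Vendor.")}
    return vendor, normalized


# Hypothetical parsed event; the Vendor.* field name is made up.
event = {
    "@rawstring": "raw syslog line",
    "source.ip": "10.0.0.5",
    "Vendor.attacker_ip": "10.0.0.5",
}

vendor, normalized = split_fields(event)
print(sorted(vendor))      # ['Vendor.attacker_ip']
print(sorted(normalized))  # ['@rawstring', 'source.ip']
```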

The fields that the parser currently maps the data to are chosen based on what seems most relevant, and may be expanded in the future. However, the parser won't necessarily normalize every field that could be normalized.

Event Categorisation

As part of the schema, events are categorized by fields:

event.category

event.kind

event.type

event.category is an array, so it needs to be searched using the following syntax:

array:contains("event.category[]", value="network")

This query will find events where some event.category[n] field contains the value network, regardless of what n is.
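The matching behaviour described here can be illustrated with a small Python sketch. It only mimics the semantics on a dictionary of flattened fields such as `event.category[0]` and `event.category[1]`. It is not how LogScale implements `array:contains`, and the helper name and sample values are hypothetical.

```python
import re


def array_contains(event: dict, array: str, value: str) -> bool:
    """Return True if any element field of the flattened array equals value."""
    # Accept the "name[]" notation used in the query and strip the brackets.
    base = array[:-2] if array.endswith("[]") else array
    # Element fields look like name[0], name[1], and so on.
    pattern = re.compile(re.escape(base) + r"\[\d+\]")
    return any(pattern.fullmatch(k) and v == value for k, v in event.items())


# Hypothetical event with a two-element category array.
event = {
    "event.category[0]": "intrusion_detection",
    "event.category[1]": "network",
    "event.kind": "alert",
}

print(array_contains(event, "event.category[]", "network"))  # True
print(array_contains(event, "event.category[]", "file"))     # False
```

The index n never appears in the call, which is the point of the `[]` notation: the match succeeds whichever position holds the value.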

Note that not all events will be categorized to this level of detail.

Normalized Fields

Here are some of the normalized fields which are being set by this parser:

ecs.* (e.g. ecs.version)

event.* (e.g. event.module, event.category, event.type, event.id, event.risk, event.kind)

process.* (e.g. process.name, process.user.name, process.pid, process.command)

network.* (e.g. network.protocol)

http.* (e.g. http.request.method, http.response.status)

log.* (e.g. log.syslog.appname, log.syslog.priority, log.syslog.procid, log.syslog., log.syslog.version, log.syslog.structured, log.syslog.hostname, log.syslog.msgid, log.message)

tls.* (e.g. tls.version, tls.cipher)

threat.* (e.g. threat.indicator.type, threat.indicator.port, threat.indicator.name, threat.indicator.ip)

source.* (e.g. source.port, source.ip)

url.* (e.g. url.full, url.scheme, url.domain)

user.* (e.g. user.name)

user_agent.* (e.g. user_agent.name, user_agent.string)