Network Security Configuration

The OCI deployment uses security lists and Network Security Groups (NSGs) to control network access. This section covers the complete network architecture for DR deployments.

VCN Network Architecture

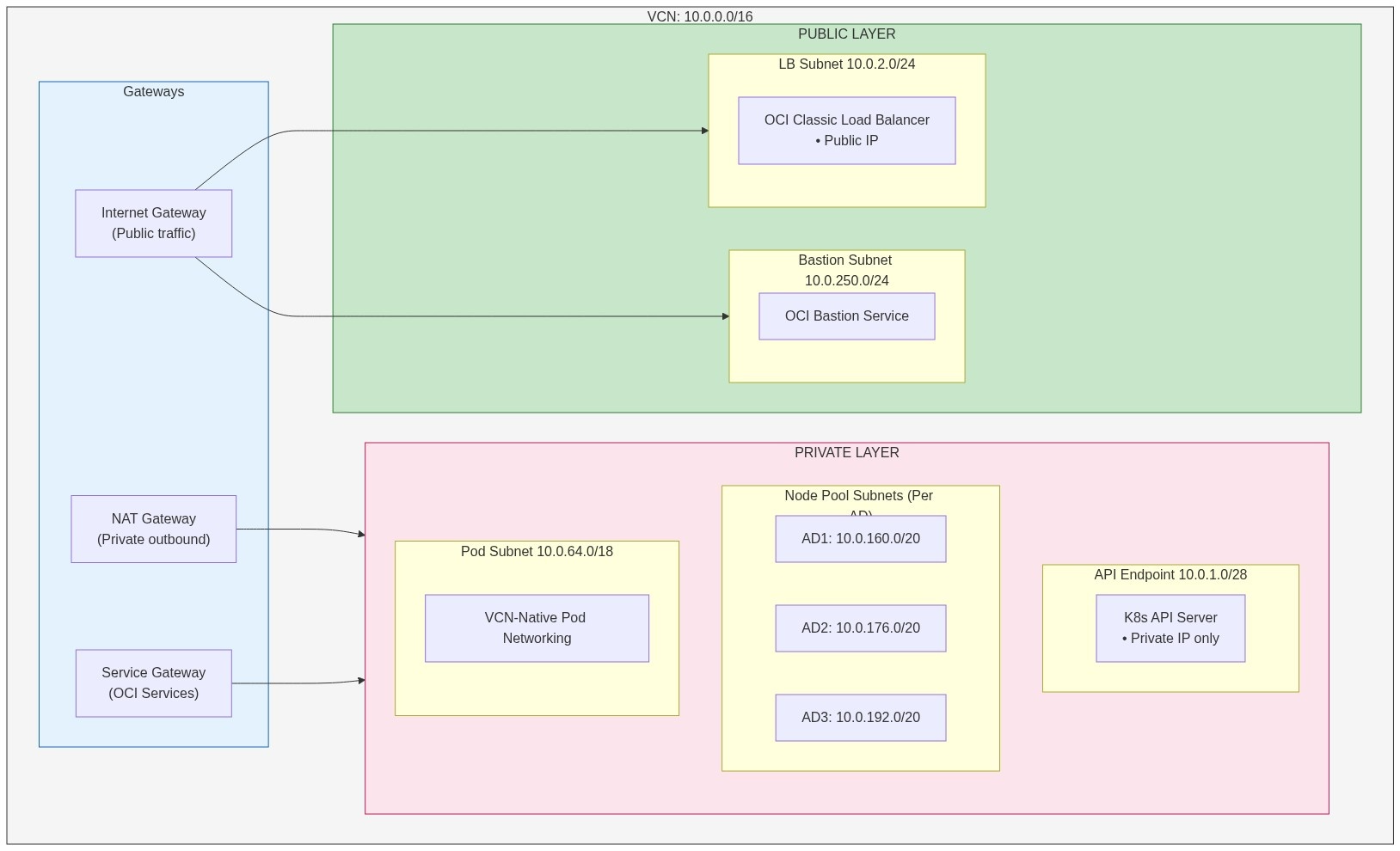

Each DR cluster (primary and secondary) has its own VCN with the following architecture:


Subnet Configuration

| Subnet | CIDR | Type | Route Table | Purpose |
|---|---|---|---|---|
| ${cluster_name}-lb-subnet | 10.0.2.0/24 | Public | public-rt (IGW) | OCI Classic Load Balancer |
| ${cluster_name}-bastion-subnet | 10.0.250.0/24 | Public | bastion-enhanced-rt (IGW) | OCI Bastion Service |
| ${cluster_name}-cluster-endpoint-subnet | 10.0.1.0/28 | Private | private-rt (NAT+SGW) | Kubernetes API endpoint |
| ${cluster_name}-node-pool-ad1 | 10.0.160.0/20 | Private | private-rt (NAT+SGW) | Worker nodes AD1 |
| ${cluster_name}-node-pool-ad2 | 10.0.176.0/20 | Private | private-rt (NAT+SGW) | Worker nodes AD2 |
| ${cluster_name}-node-pool-ad3 | 10.0.192.0 | Private | private-rt (NAT+SGW) | Worker nodes AD3 |
| ${cluster_name}-pod-subnet | 10.0.64.0/18 | Private | private-rt (NAT+SGW) | VCN-native pod IPs |
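
A layout like this can be sanity-checked before it is applied. The sketch below is not part of the deployment code; it uses Python's ipaddress module to confirm that every subnet sits inside the VCN and that no two subnets overlap. It assumes a VCN CIDR of 10.0.0.0/16 (the value shown in the control_plane_allowed_cidrs example) and a /20 prefix for the AD3 node pool, which the table does not state. The helper name check_subnets is hypothetical.

```python
import ipaddress
from itertools import combinations

# ASSUMPTION: VCN CIDR taken from the control_plane_allowed_cidrs example.
VCN_CIDR = ipaddress.ip_network("10.0.0.0/16")

# Subnet CIDRs from the table above.
SUBNETS = {
    "lb-subnet": "10.0.2.0/24",
    "bastion-subnet": "10.0.250.0/24",
    "cluster-endpoint-subnet": "10.0.1.0/28",
    "node-pool-ad1": "10.0.160.0/20",
    "node-pool-ad2": "10.0.176.0/20",
    "node-pool-ad3": "10.0.192.0/20",  # ASSUMPTION: prefix not given in the table
    "pod-subnet": "10.0.64.0/18",
}


def check_subnets(vcn, subnets):
    """Return a list of human-readable problems (empty if none are found)."""
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in subnets.items()}
    problems = []
    # Every subnet must be contained in the VCN.
    for name, net in nets.items():
        if not net.subnet_of(vcn):
            problems.append(f"{name} ({net}) is outside {vcn}")
    # No two subnets may share addresses.
    for (a, net_a), (b, net_b) in combinations(nets.items(), 2):
        if net_a.overlaps(net_b):
            problems.append(f"{a} ({net_a}) overlaps {b} ({net_b})")
    return problems


print(check_subnets(VCN_CIDR, SUBNETS) or "no problems found")
```

The same check can be pointed at the secondary cluster's values to catch a mistyped CIDR before Terraform reports the conflict.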

Route Tables

Public Route Table (${cluster_name}-public-rt):

| Destination | Target | Purpose |
|---|---|---|
| 0.0.0.0/0 | Internet Gateway | Public internet access |

Private Route Table (${cluster_name}-private-rt):

| Destination | Target | Purpose |
|---|---|---|
| 0.0.0.0/0 | NAT Gateway | Outbound internet via NAT |
| All Oracle Services | Service Gateway | OCI Services access |

Bastion Enhanced Route Table (${cluster_name}-bastion-enhanced-rt):

| Destination | Target | Purpose |
|---|---|---|
| 0.0.0.0/0 | Internet Gateway | Bastion service connectivity |

Network Security Groups (NSGs)

Four NSGs control traffic flow between the internet, load balancer, worker nodes, and Kubernetes API.

The most common networking issue is TLS timeouts when accessing the load balancer from the internet. This happens when the Load Balancer NSG has an egress rule to send traffic to worker nodes, but the Worker NSG is missing the corresponding ingress rule to accept that traffic.

Both rules are required because OCI NSG rules are unidirectional.

Quick fix for TLS timeout: Ensure the Worker NSG has an ingress rule from the LB NSG on ports 30000-32767 (see the worker_ingress_from_lb_nsg rule in section 2 below).

1. Worker NSG (worker-nsg)

Ingress Rules:

| Protocol | Source | Port(s) | Description |
|---|---|---|---|
| ALL | worker-nsg | All | Worker-to-worker communication |
| ALL | bastion-nsg | All | Bastion access to workers |
| TCP | OCI Services | 10250 | OKE control plane to kubelet |
| TCP | OCI Services | 10255 | OKE control plane extended |
| TCP | OCI Services | 12250 | OKE control plane communication |
| TCP | OCI Services | 22 | OKE installation |
| TCP | VCN CIDR | 22, 80, 443, 6443, 10250, 10255 | Internal cluster traffic |
| TCP | VCN CIDR | 30000-32767 | NodePort range for LB health checks |
| ICMP | VCN CIDR | Type 3, Code 4 | Path discovery |

Egress Rules:

| Protocol | Destination | Port(s) | Description |
|---|---|---|---|
| ALL | 0.0.0.0/0 | All | General internet access (via NAT) |
| TCP | OCI Services | 443, 12250 | OKE services communication |
| TCP | 0.0.0.0/0 | 6443 | Kubernetes API |
| UDP | 0.0.0.0/0 | 53 | DNS resolution |
| UDP | 0.0.0.0/0 | 123 | NTP time sync |

2. Public Load Balancer NSG (public-lb-nsg)

Ingress Rules:

| Protocol | Source | Port(s) | Description |
|---|---|---|---|
| TCP | public_lb_cidrs | 443 | HTTPS from allowed CIDRs |
| TCP | public_lb_cidrs | 80 | HTTP from allowed CIDRs |

Egress Rules:

| Protocol | Destination | Port(s) | Description |
|---|---|---|---|
| TCP | worker-nsg | 30000-32767 | LB to worker NodePort services |

Important

NSG Rules Are Unidirectional

OCI NSG rules are unidirectional: an egress rule on NSG-A toward NSG-B does not by itself allow the traffic. NSG-B must also have a corresponding ingress rule from NSG-A.

Required Rule Pair for LB→Worker Traffic:

| NSG | Direction | Source/Dest | Ports | Rule Name |
|---|---|---|---|---|
| public-lb-nsg | EGRESS | worker-nsg | 30000-32767 | public_lb_egress_nodeport |
| worker-nsg | INGRESS | public-lb-nsg | 30000-32767 | worker_ingress_from_lb_nsg |

Without worker_ingress_from_lb_nsg, the TLS handshake times out: the LB can send traffic, but the worker NSG drops it at the receiver.
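
The pairing requirement can be expressed as a small consistency check. The following is a minimal sketch, not the module's own validation: it models only NSG-to-NSG TCP rules (CIDR-based rules are ignored), and the Rule class and unmatched_egress function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    nsg: str        # NSG the rule is attached to
    direction: str  # "INGRESS" or "EGRESS"
    peer: str       # source NSG (ingress) or destination NSG (egress)
    port_min: int
    port_max: int


def unmatched_egress(rules):
    """Return egress rules whose peer NSG has no ingress rule covering the same ports."""
    ingress = [r for r in rules if r.direction == "INGRESS"]
    missing = []
    for egress in (r for r in rules if r.direction == "EGRESS"):
        covered = any(
            i.nsg == egress.peer
            and i.peer == egress.nsg
            and i.port_min <= egress.port_min
            and egress.port_max <= i.port_max
            for i in ingress
        )
        if not covered:
            missing.append(egress)
    return missing


# The rule pair from the table above.
lb_egress = Rule("public-lb-nsg", "EGRESS", "worker-nsg", 30000, 32767)
worker_ingress = Rule("worker-nsg", "INGRESS", "public-lb-nsg", 30000, 32767)

print(unmatched_egress([lb_egress]))                  # reports the LB egress rule
print(unmatched_egress([lb_egress, worker_ingress]))  # []
```

An egress rule reported by this check corresponds to the TLS timeout case: the sender is allowed to transmit, but nothing on the receiving NSG admits the packets.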

Terraform code (modules/oci/core/main.tf):

    resource "oci_core_network_security_group_security_rule" "worker_ingress_from_lb_nsg" {
      network_security_group_id = oci_core_network_security_group.worker.id
      direction                 = "INGRESS"
      protocol                  = "6" # TCP
      source                    = oci_core_network_security_group.public_lb.id
      source_type               = "NETWORK_SECURITY_GROUP"
      description               = "Allow NodePort traffic from public LB NSG"

      tcp_options {
        destination_port_range {
          min = 30000
          max = 32767
        }
      }
    }

3. API Endpoint NSG

Ingress Rules:

| Protocol | Source | Port(s) | Description |
|---|---|---|---|
| TCP | VCN CIDR | 6443, 22 | Internal cluster access |
| TCP | control_plane_allowed_cidrs | 6443 | External K8s API access |

Egress Rules:

| Protocol | Destination | Port(s) | Description |
|---|---|---|---|
| ALL | 0.0.0.0/0 | All | All outbound traffic |

4. Bastion NSG (bastion-nsg)

Ingress Rules:

| Protocol | Source | Port(s) | Description |
|---|---|---|---|
| TCP | bastion_client_allow_list CIDRs | 22 | SSH from allowed IPs |

Egress Rules:

| Protocol | Destination | Port(s) | Description |
|---|---|---|---|
| ALL | 0.0.0.0/0 | All | All outbound traffic |
| TCP | VCN CIDR | 22 | SSH to VCN resources |
| ICMP | VCN CIDR | All | ICMP to VCN |

Load Balancer Access Control (public_lb_cidrs)

The public_lb_cidrs variable controls which IP ranges can access the load balancer over HTTP (port 80) and HTTPS (port 443). This is a security measure to restrict access to known IP ranges (e.g., corporate networks, VPNs).

    # Example: Restrict LB access to specific IP ranges
    public_lb_cidrs = [
      "10.110.192.0/22",   # Internal network
      "130.41.55.146/32",  # Specific external IP
      "208.127.86.195/32", # Another external IP
    ]

Impact on certificate validation:

- When public_lb_cidrs restricts access, Let's Encrypt HTTP-01 validation fails because Let's Encrypt cannot reach port 80.
- Solution: Use DNS-01 validation instead (see DNS-01 Certificate Issuance in the DR Operations Guide).
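
To see why HTTP-01 validation fails, it helps to treat the allowlist as a simple membership test. The sketch below uses the example CIDRs from this section; is_allowed is a hypothetical helper, and 198.51.100.7 is a documentation-range address standing in for a validation server whose real address is not known in advance.

```python
import ipaddress

# Example values from the public_lb_cidrs snippet above.
PUBLIC_LB_CIDRS = ["10.110.192.0/22", "130.41.55.146/32", "208.127.86.195/32"]


def is_allowed(client_ip, cidrs):
    """Return True if client_ip falls inside any of the allowed CIDR blocks."""
    ip = ipaddress.ip_address(client_ip)
    return any(ip in ipaddress.ip_network(cidr) for cidr in cidrs)


print(is_allowed("130.41.55.146", PUBLIC_LB_CIDRS))  # True: listed /32
print(is_allowed("10.110.193.20", PUBLIC_LB_CIDRS))  # True: inside the /22
print(is_allowed("198.51.100.7", PUBLIC_LB_CIDRS))   # False: not on the list
```

Any client outside the listed ranges, including a certificate authority's validation servers, is rejected in the same way, which is why DNS-01 is the practical choice when the list is restrictive.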

Bastion Service Access Control (bastion_client_allow_list)

The bastion_client_allow_list variable controls which IP ranges can connect to the OCI Bastion Service for cluster access. This is separate from the load balancer security list.

Bastion is enabled by default (provision_bastion = true) for maximum security. To disable bastion and use public endpoint access instead, set provision_bastion = false and endpoint_public_access = true.

This variable is required when provision_bastion=true (default). The list cannot include 0.0.0.0/0.
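
The two constraints (a non-empty list, and no 0.0.0.0/0 entry) are easy to check before running Terraform. The following sketch shows one way to do so; validate_allow_list is a hypothetical helper and does not reflect how the module itself enforces the rule, which is not shown in this guide.

```python
import ipaddress


def validate_allow_list(cidrs):
    """Raise ValueError if the list is empty, malformed, or open to every address."""
    if not cidrs:
        raise ValueError("allow list must contain at least one CIDR")
    for cidr in cidrs:
        # ip_network raises ValueError for malformed CIDRs or set host bits.
        net = ipaddress.ip_network(cidr)
        if net.prefixlen == 0:
            raise ValueError(f"{cidr} would open access to every address")
    return cidrs


validate_allow_list(["203.176.185.196/32", "147.161.213.22/32"])  # passes

try:
    validate_allow_list(["0.0.0.0/0"])
except ValueError as err:
    print(f"rejected: {err}")
```

The same check applies unchanged to control_plane_allowed_cidrs, which carries the same restriction.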

    # Example: Restrict bastion access to specific IPs
    bastion_client_allow_list = [
      "203.176.185.196/32", # Your IP
      "147.161.213.22/32",  # VPN endpoint
    ]

Kubernetes API Access Control (control_plane_allowed_cidrs)

The control_plane_allowed_cidrs variable controls which IP ranges can access the Kubernetes API (port 6443) via the API endpoint NSG.

This variable is required when endpoint_public_access=true. The list cannot include 0.0.0.0/0.

    # Example: Restrict K8s API access to specific IPs
    control_plane_allowed_cidrs = [
      "10.0.0.0/16",        # VCN CIDR (always included)
      "203.176.185.196/32", # Your IP for direct API access
    ]

Kubernetes API Access Modes

OKE clusters support two access modes for the Kubernetes API endpoint:

| Access Mode | provision_bastion | endpoint_public_access | Security Level |
|---|---|---|---|
| Bastion Tunnel | true | false | Higher (no public exposure) |
| Public Endpoint | false | true | Medium (IP allowlist) |

Bastion Tunnel Mode (Recommended for Production):

- API endpoint is private (VCN only)
- Access via OCI Bastion Service SSH tunnel
- Requires bastion_client_allow_list configuration
- Terraform commands need: -var="kubernetes_api_host=https://127.0.0.1:<port>"

Public Endpoint Mode (Development/Testing):

- API endpoint is publicly accessible
- Protected by control_plane_allowed_cidrs IP allowlist
- No SSH tunnel required
- Terraform auto-discovers endpoint from kubeconfig
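
The relationship between the two flags and the extra Terraform argument can be summarised in a few lines. This is an illustrative sketch only: access_mode and extra_terraform_args are hypothetical helpers, the local port 6443 is an assumed tunnel port, and flag combinations not listed in the table are treated as out of scope rather than as invalid.

```python
def access_mode(provision_bastion, endpoint_public_access):
    """Map the two flags to the access modes listed in the table above."""
    if provision_bastion and not endpoint_public_access:
        return "bastion-tunnel"
    if endpoint_public_access and not provision_bastion:
        return "public-endpoint"
    raise ValueError("flag combination is not covered by the table above")


def extra_terraform_args(mode, local_port=None):
    """Return the extra -var argument needed in bastion tunnel mode, if any."""
    if mode == "bastion-tunnel":
        if local_port is None:
            raise ValueError("bastion tunnel mode needs the local tunnel port")
        return [f"-var=kubernetes_api_host=https://127.0.0.1:{local_port}"]
    # Public endpoint mode: the endpoint is discovered from kubeconfig.
    return []


# ASSUMPTION: the SSH tunnel listens on local port 6443.
print(extra_terraform_args(access_mode(True, False), local_port=6443))
print(extra_terraform_args(access_mode(False, True)))
```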

Security architecture:

| Access Mode | Control Mechanism | Opens to internet? |
|---|---|---|
| Cluster API (kubectl) | OCI Bastion Service + bastion_client_allow_list | No |
| Cluster API (direct) | API Endpoint NSG + control_plane_allowed_cidrs | Optional |
| Load Balancer (HTTPS) | Security List + public_lb_cidrs | No - restricted to specific IPs |
| SSH to nodes | Not exposed in public security list | No |

Note

The OCI Bastion Service manages its own access control. SSH (port 22) is not opened in the public security list; the bastion service handles access directly.

OCI Load Balancer Backend Node Selection (ingress-nginx)

By default, the OCI Cloud Controller Manager (CCM) adds all cluster nodes as backends to a Service of type LoadBalancer. This causes health check and traffic failures on nodes that don't run the target pods (for example, nodes without ingress-nginx).

To restrict backends to only the nodes running ingress-nginx pods, this implementation uses the OCI Classic Flexible Load Balancer with a node label selector and externalTrafficPolicy: Local:

    # Applied to ingress-nginx Service via nginx_ingress_sets in main.tf
    annotations:
      oci.oraclecloud.com/load-balancer-type: lb
      service.beta.kubernetes.io/oci-load-balancer-subnet1: <lb_subnet_id>
      service.beta.kubernetes.io/oci-load-balancer-security-list-management-mode: "All"
      oci.oraclecloud.com/oci-network-security-groups: <lb_nsg_id>
      service.beta.kubernetes.io/oci-load-balancer-shape: flexible
      service.beta.kubernetes.io/oci-load-balancer-shape-flex-min: "10"
      service.beta.kubernetes.io/oci-load-balancer-shape-flex-max: "100"
      service.beta.kubernetes.io/oci-load-balancer-backend-protocol: TCP
      oci.oraclecloud.com/node-label-selector: k8s-app=logscale-ingress
    spec:
      externalTrafficPolicy: Local

Key configuration options:

| Annotation/Setting | Value | Purpose |
|---|---|---|
| oci.oraclecloud.com/load-balancer-type | lb | Use Classic Load Balancer (not NLB) |
| service.beta.kubernetes.io/oci-load-balancer-security-list-management-mode | All | CCM manages security list rules |
| oci.oraclecloud.com/oci-network-security-groups | NSG OCID | Attach LB NSG for ingress rules |
| service.beta.kubernetes.io/oci-load-balancer-shape | flexible | Flexible bandwidth (10-100 Mbps) |
| service.beta.kubernetes.io/oci-load-balancer-backend-protocol | TCP | TLS passthrough to nginx-ingress |
| oci.oraclecloud.com/node-label-selector | k8s-app=logscale-ingress | Only ingress nodes as backends |
| externalTrafficPolicy | Local | Traffic only to nodes with pods |
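
When these annotations are passed as Helm set values, as nginx_ingress_sets does, the dots inside each annotation key must be escaped with a backslash so that Helm does not read them as path separators. The sketch below shows the transformation; helm_set_name is a hypothetical helper, and the default prefix is the one used by the nginx_ingress_sets entries in this guide.

```python
def helm_set_name(annotation_key, prefix="controller.service.annotations"):
    """Build a Helm --set path, escaping dots inside the annotation key."""
    return f"{prefix}." + annotation_key.replace(".", "\\.")


print(helm_set_name("oci.oraclecloud.com/load-balancer-type"))
# controller.service.annotations.oci\.oraclecloud\.com/load-balancer-type
```

In a Terraform string the backslash itself has to be escaped, which is why the names appear with a doubled backslash in main.tf.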

Configuration location: main.tf (root module)

    nginx_ingress_sets = [
      {
        name  = "controller.service.annotations.oci\\.oraclecloud\\.com/load-balancer-type"
        value = "lb"
      },
      {
        name  = "controller.service.annotations.service\\.beta\\.kubernetes\\.io/oci-load-balancer-subnet1"
        value = module.core.lb_subnet_id
      },
      {
        name  = "controller.service.annotations.service\\.beta\\.kubernetes\\.io/oci-load-balancer-security-list-management-mode"
        value = "All"
      },
      {
        name  = "controller.service.annotations.oci\\.oraclecloud\\.com/oci-network-security-groups"
        value = module.core.lb_nsg_id
      },
      {
        name  = "controller.service.annotations.service\\.beta\\.kubernetes\\.io/oci-load-balancer-shape"
        value = "flexible"
      },
      {
        name  = "controller.service.annotations.service\\.beta\\.kubernetes\\.io/oci-load-balancer-backend-protocol"
        value = "TCP"
      },
      {
        name  = "controller.service.annotations.oci\\.oraclecloud\\.com/node-label-selector"
        value = "k8s-app=logscale-ingress"
      },
      {
        name  = "controller.service.externalTrafficPolicy"
        value = "Local"
      }
    ]

Troubleshooting load balancer health checks:

| Symptom | Cause | Solution |
|---|---|---|
| Most backends unhealthy / failing health checks | Missing node label selector or wrong externalTrafficPolicy | Add oci.oraclecloud.com/node-label-selector and set externalTrafficPolicy: Local |
| LB created in wrong subnet | Missing subnet annotation | Ensure service.beta.kubernetes.io/oci-load-balancer-subnet1 is set |
| TLS timeout (TCP connects but TLS fails) | LB NSG not attached or Worker NSG missing ingress from LB NSG | Verify oci.oraclecloud.com/oci-network-security-groups and Worker NSG rules |

Verify that the annotation is applied:

    kubectl --context oci-primary get svc dr-primary-nginx-ingress-nginx-controller \
      -n logging-ingress -o jsonpath='{.metadata.annotations}' | jq .

Request Flow (Internet → LogScale)

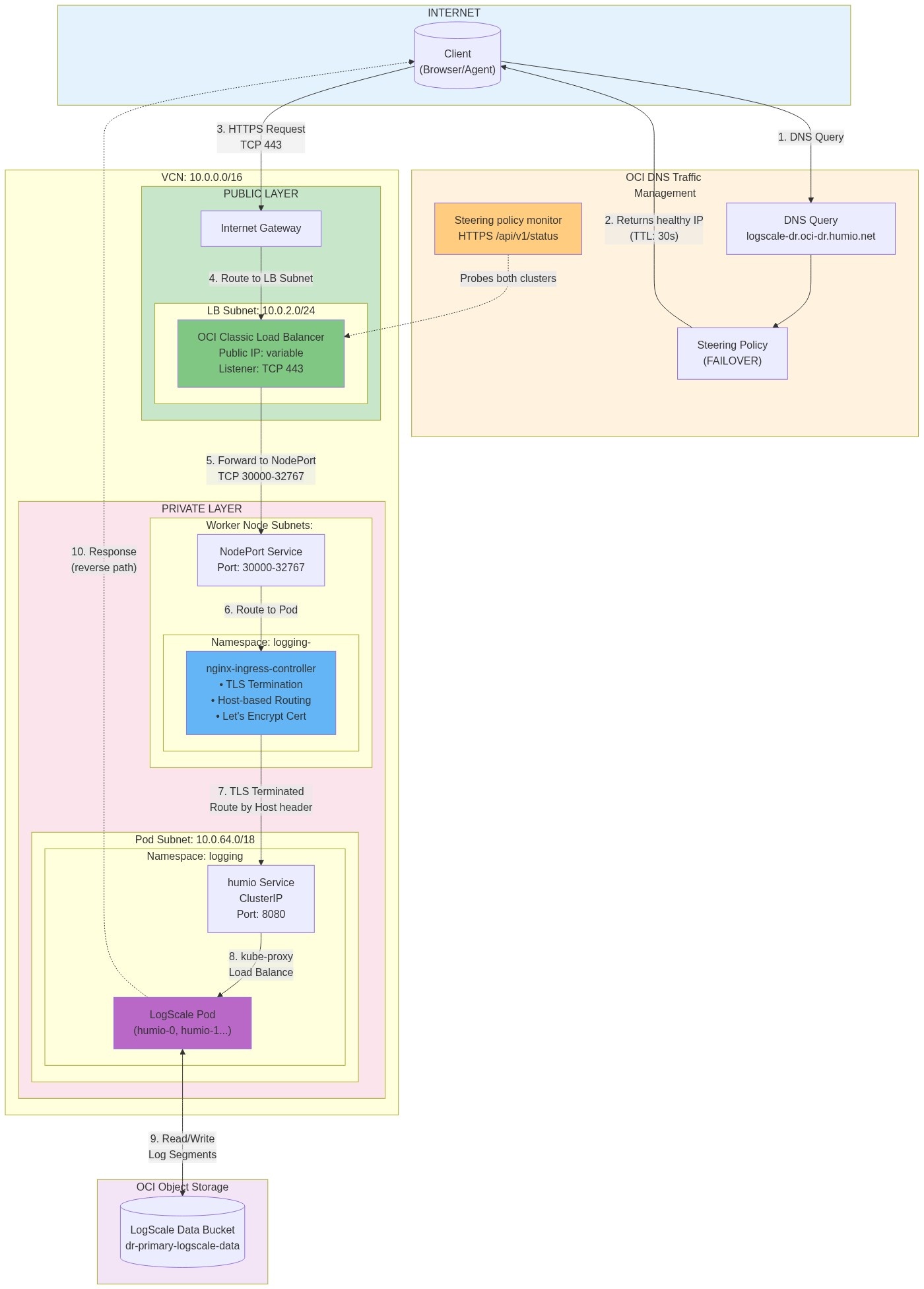

The following diagram illustrates the complete network traffic flow from an external client to LogScale pods, showing each network boundary crossing and the security controls at each layer.


Traffic Flow Details:

| Step | Component | Protocol/Port | Security Control | Description |
|---|---|---|---|---|
| 1 | DNS Query | UDP 53 | Public DNS | Client queries OCI DNS for logscale-dr.oci-dr.humio.net |
| 2 | DNS Response | UDP 53 | Steering Policy | Returns healthy cluster IP (primary preferred, TTL 30s) |
| 3 | HTTPS Request | TCP 443 | public_lb_cidrs | Client connects to Load Balancer public IP |
| 4 | IGW Routing | TCP 443 | Route Table | Internet Gateway routes to LB subnet |
| 5 | LB → NodePort | TCP 30000-32767 | Security List egress | LB forwards to nginx-ingress NodePort on worker nodes |
| 6 | NodePort → Pod | TCP (internal) | Worker NSG | Traffic routed to nginx-ingress pod |
| 7 | Ingress Routing | HTTP 8080 | Ingress rules | nginx terminates TLS, routes based on Host header |
| 8 | Service → Pod | TCP 8080 | NetworkPolicy (if configured) | kube-proxy load balances to LogScale pods |
| 9 | Storage Access | HTTPS 443 | IAM Policy | LogScale reads/writes segments to Object Storage |
| 10 | Response | TCP 443 | Stateful | Response follows reverse path to client |

Key Security Boundaries:

- Internet → VCN: Controlled by public_lb_cidrs in Security List
- LB → Workers: NodePort range (30000-32767) allowed from VCN CIDR in Worker NSG
- Pod-to-Pod: Controlled by Kubernetes NetworkPolicy (if configured)
- Pod → Storage: Controlled by OCI IAM policies for Object Storage access

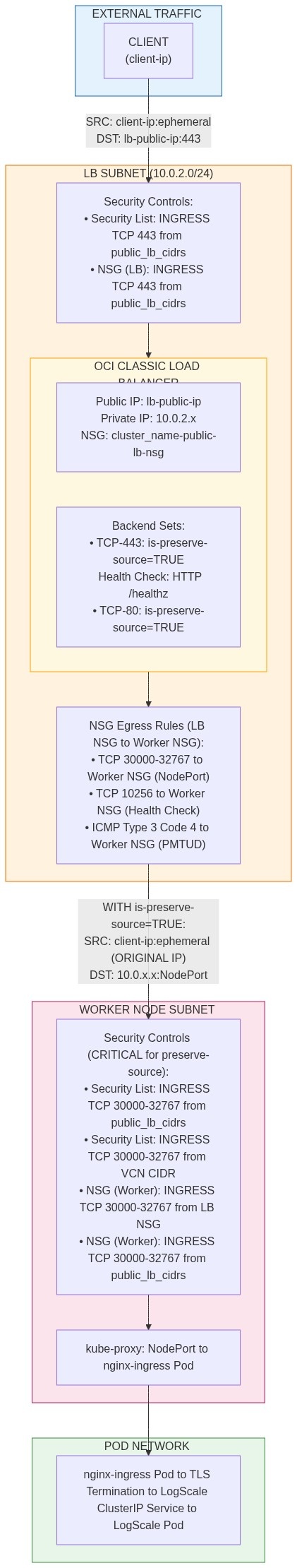

Detailed LB Traffic Flow with Preserve-Source

When is-preserve-source: true is set on the OCI Classic Load Balancer, the client's original IP address is preserved through the entire path. This requires special security rules.

Source IP Comparison:

| Mode | Source IP at Worker | Use Case |
|---|---|---|
| is-preserve-source=TRUE | Client's original IP (<client-ip>) | Client IP logging, geo-routing |
| is-preserve-source=FALSE | LB's private IP (10.0.2.x) | Simpler security rules |

Key Points:

is-preserve-source=TRUE

When is-preserve-source=TRUE is enabled on the OCI LB:

- Client IP is preserved: Traffic arrives at worker nodes with the original client IP address, not the LB's private IP.
- Security rules must allow client IPs: Both the worker NSG and security list must have rules allowing the public_lb_cidrs to access the NodePort range (30000-32767).
- Health checks use LB's IP: Health check traffic still uses the LB's private IP as source.
- Bidirectional rules required:
  - LB NSG → Worker NSG (egress rules)
  - Worker NSG ← LB NSG (ingress rules)
  - Worker NSG ← public_lb_cidrs (ingress rules for preserve-source)
  - Worker Security List ← public_lb_cidrs (ingress rules for preserve-source)
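
The effect of the flag on the worker-side rules can be summarised as a function of the setting. This is a simplified sketch based only on the points above; nodeport_ingress_sources is a hypothetical helper and the NSG name is the one used in the rule-pair table.

```python
def nodeport_ingress_sources(is_preserve_source, public_lb_cidrs, lb_nsg="public-lb-nsg"):
    """Sources the worker NSG must accept on ports 30000-32767."""
    # The LB NSG is always needed: health checks use the LB's private IP.
    sources = [lb_nsg]
    if is_preserve_source:
        # Client packets keep their original source IP, so the client
        # ranges themselves must be admitted as well.
        sources += list(public_lb_cidrs)
    return sources


cidrs = ["10.110.192.0/22", "130.41.55.146/32"]
print(nodeport_ingress_sources(False, cidrs))  # ['public-lb-nsg']
print(nodeport_ingress_sources(True, cidrs))   # LB NSG plus both client ranges
```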

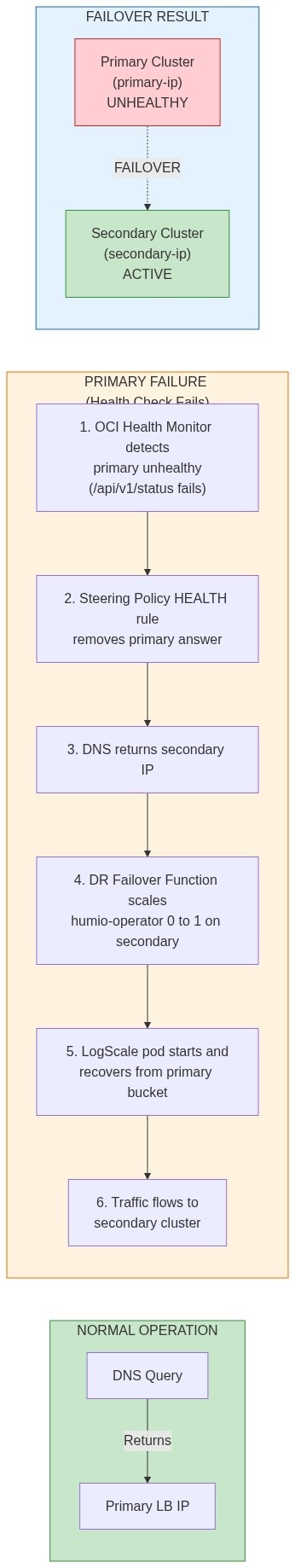

DR Failover Scenario

The diagram below illustrates the network path changes during a DR failover event. When the primary cluster becomes unavailable, the OCI DNS steering policy redirects traffic to the secondary cluster. For the full failover procedure and automation details, see the OCI Disaster Recovery (DR) Operations Guide.


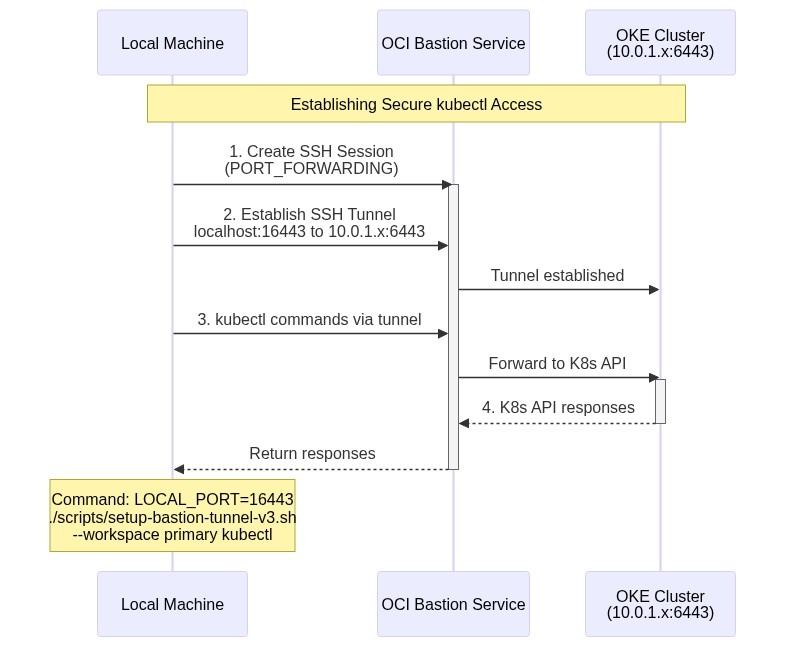

Bastion Access Flow (kubectl)

The diagram below shows how kubectl commands reach the private Kubernetes API endpoint through the OCI Bastion Service SSH tunnel. This is the recommended access pattern for production clusters where provision_bastion=true (default).

DR Network Considerations

Note

For the full DR failover procedure including DNS steering policy configuration and automated failover via OCI Functions, see the OCI Disaster Recovery (DR) Operations Guide.

For DR failover to work correctly, ensure:

- Both clusters have identical VCN structures: same subnet CIDRs relative to the VCN
- Cross-region Object Storage access: IAM policies allow the secondary to read the primary bucket
- Health check accessibility: health check endpoints can reach both cluster load balancers
- DNS propagation: low TTL (30s) on the steering policy for fast failover
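
The first requirement, identical subnet layout relative to each VCN, can be checked by comparing subnet offsets rather than absolute addresses. The sketch below assumes, purely for illustration, that the secondary VCN uses 10.1.0.0/16; relative_layout is a hypothetical helper.

```python
import ipaddress


def relative_layout(vcn_cidr, subnet_cidrs):
    """Describe each subnet as (offset from the VCN base address, prefix length)."""
    base = int(ipaddress.ip_network(vcn_cidr).network_address)
    layout = set()
    for cidr in subnet_cidrs:
        net = ipaddress.ip_network(cidr)
        layout.add((int(net.network_address) - base, net.prefixlen))
    return layout


primary = relative_layout("10.0.0.0/16", ["10.0.2.0/24", "10.0.64.0/18"])
# ASSUMPTION: hypothetical secondary VCN CIDR, not taken from this guide.
secondary = relative_layout("10.1.0.0/16", ["10.1.2.0/24", "10.1.64.0/18"])

print(primary == secondary)  # True when the two layouts match
```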